After reading several posts and blogs about vSphere 5 and E1000E performance, I became curious whether those claims hold up and how vSphere actually behaves under test.

Test setup

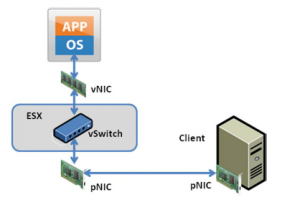

The setup I used is similar to the one described in http://www.vmware.com/pdf/vsp_4_vmxnet3_perf.pdf. It looks like this:

Bare metal server (Client): B22-M3, 16GB, 2xE5-2450, 1280VIC

vSphere ESXi 5.0 server: B200-M3, 64GB, 2xE5-2680, 1280VIC

To accommodate the tests, the 1280VICs are connected to 2108 IOMs, and only Fabric A / 6248-A is used.

The VM is configured in the following way (screenshot):

- Local Area Connection: E1000

- Local Area Connection 2: VMXNET3

- Local Area Connection 3: E1000E

- 4GB Memory, 1 vCPU

- Windows 2008R2

Test results

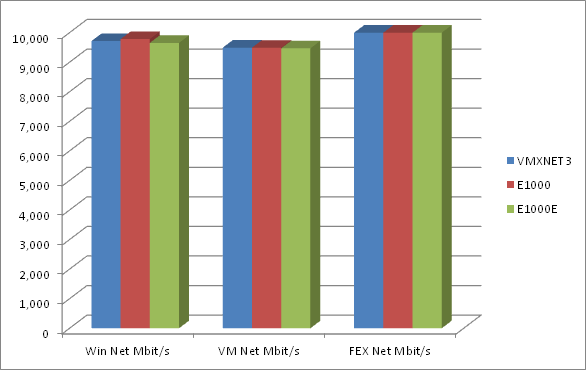

The following are the results of the best-performing test run:

Raw Data

Adapter

Win Net

Win CPU

VM CPU

VM Net

FEX Net

Graph

VMXNET3

9715

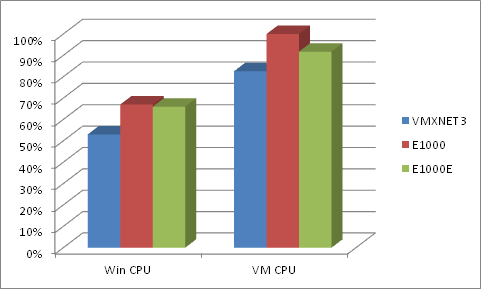

53%

82.57%

9493.67

9.92

link

E1000

9784

67%

118.89%

9491.87

9.99

link

E1000E

9654

66%

91.77%

9469.47

10.0

link

Column explanations:

Column

description

Win Net

Average transmission in Mbit/s on Windows

Win CPU

Average CPU load on Windows measured

VM CPU

%USED counter in esxtop

VM Net

MbTX/s in esxtop

FEX Net

Tx Bit Rate in Gbps as seen by the 2108 IOM module

| Adapter | Win Net | Win CPU | VM CPU | VM Net | FEX Net | Graph |

|---|---|---|---|---|---|---|

| VMXNET3 | 9715 | 53% | 82.57% | 9493.67 | 9.92 | link |

| E1000 | 9784 | 67% | 118.89% | 9491.87 | 9.99 | link |

| E1000E | 9654 | 66% | 91.77% | 9469.47 | 10.0 | link |

| Column | description |

|---|---|

| Win Net | Average transmission in Mbit/s on Windows |

| Win CPU | Average CPU load on Windows measured |

| VM CPU | %USED counter in esxtop |

| VM Net | MbTX/s in esxtop |

| FEX Net | Tx Bit Rate in Gbps as seen by the 2108 IOM module |

Data interpretation

All three adapters can clearly be filled to full line speed. There are small differences, but these could well be due to sampling periods and similar effects.
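To put a number on "small differences", here is a minimal sketch that computes, for each measurement point, the gap between the fastest and slowest adapter as a percentage of the fastest. The values are copied from the raw data table; the dictionary layout and the `spread_percent` helper are my own illustration, not part of the original test tooling.

```python
# Throughput per adapter at each measurement point, copied from the raw data table.
measurements = {
    "Win Net (Mbit/s)": {"VMXNET3": 9715, "E1000": 9784, "E1000E": 9654},
    "VM Net (Mbit/s)": {"VMXNET3": 9493.67, "E1000": 9491.87, "E1000E": 9469.47},
    "FEX Net (Gbps)": {"VMXNET3": 9.92, "E1000": 9.99, "E1000E": 10.0},
}


def spread_percent(values):
    """Gap between the fastest and slowest adapter, as a percentage of the fastest."""
    highest = max(values.values())
    lowest = min(values.values())
    return (highest - lowest) / highest * 100


# One spread value per measurement point (hypothetical helper structure).
spreads = {name: spread_percent(values) for name, values in measurements.items()}

for name, spread in spreads.items():
    print(f"{name}: {spread:.2f}% between fastest and slowest adapter")
```

With these figures, every spread stays below 1.5%, which is consistent with all three adapters reaching line rate.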

CPU usage is higher for the E1000 and E1000E adapters, for both Win CPU and VM CPU. However, I think only the E1000 carries a high penalty; for the E1000E the overhead stays within acceptable limits.
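The CPU penalty can be made explicit by expressing each adapter's CPU figures relative to VMXNET3. The sketch below does only that arithmetic on the values from the raw data table; choosing VMXNET3 as the baseline and the `overhead_percent` helper are assumptions of mine for illustration.

```python
# CPU figures in percent, copied from the raw data table.
cpu_usage = {
    "VMXNET3": {"win_cpu": 53.0, "vm_cpu": 82.57},
    "E1000": {"win_cpu": 67.0, "vm_cpu": 118.89},
    "E1000E": {"win_cpu": 66.0, "vm_cpu": 91.77},
}

BASELINE = "VMXNET3"  # assumption: treat the paravirtual adapter as the reference


def overhead_percent(adapter, counter):
    """Extra CPU used by `adapter` compared with the baseline, in percent."""
    base = cpu_usage[BASELINE][counter]
    return (cpu_usage[adapter][counter] / base - 1) * 100


# Relative overhead per adapter and per counter (hypothetical helper structure).
overhead = {
    adapter: {counter: overhead_percent(adapter, counter) for counter in ("win_cpu", "vm_cpu")}
    for adapter in cpu_usage
    if adapter != BASELINE
}

for adapter, counters in overhead.items():
    print(f"{adapter}: Win CPU +{counters['win_cpu']:.1f}%, VM CPU +{counters['vm_cpu']:.1f}%")
```

On the esxtop %USED counter this works out to roughly 44% extra for the E1000 against roughly 11% extra for the E1000E, while inside Windows the two emulated adapters are close to each other at around 25% above VMXNET3.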

Disclaimers

I’m not a benchmarking guy, nor is this my job, so these figures are just my personal observations and by no means the result of a full professional benchmark. They are, however, fully reproducible.

The attached graphs do show some dips. I know technically why they are there, but I did not look into fixing them.
